Most companies approach AI the wrong way. They buy the best software. They hire developers. They launch a pilot program. Then nothing changes.

Teams still work the same way. Managers are confused. Employees are afraid. And leadership wonders why their AI investment is not paying off.

The truth is simple. AI transformation is not a technology problem. It is a people problem. A culture problem. A governance problem.

This guide explains why AI projects fail and what you can do to make yours succeed.

What ‘AI Transformation Is Not a Technology Problem’ Actually Means

AI transformation is the process of rethinking how a business operates using artificial intelligence to change decisions, workflows, and outcomes. It is not the same as buying AI software and plugging it in.

The phrase ‘AI transformation is not a technology problem’ captures something many organizations learn too late. When an AI initiative struggles, the first instinct is to blame the tool. A different vendor. A better model. More compute power. But the tool is rarely the reason things break down.

The real blockers sit elsewhere:

- How open people in the organization are to doing their jobs differently

- Whether leadership has set a clear goal that AI is meant to serve

- How well teams understand what AI can and cannot do for them

- Whether existing data is reliable enough to build anything on

- Whether workflows have been redesigned, or just automated as they are

Think of it this way: AI is the engine. But an engine without a steering wheel, a driver, and a destination does not get you anywhere.

What AI Transformation Looks Like When It Works and When It Doesn’t

Before going into what causes AI transformation to fail, it helps to understand what the two paths actually look like in practice.

| When AI Transformation Works | When It Doesn’t |
| --- | --- |
| Clear business goal defined before selecting a tool | Tool purchased first, purpose figured out later |
| Employees trained on what AI will change about their role | Staff told AI is being introduced, no further context |
| Processes redesigned around AI’s strengths | AI bolted onto existing broken workflows |
| Data cleaned and governed before deployment | AI fed inconsistent, siloed, or outdated data |
| Managers act as adoption champions | Managers feel threatened and create quiet friction |
| Governance built in from day one | Governance treated as a compliance afterthought |
| Progress measured in business outcomes | Success measured in tools deployed or licenses bought |

Why Most AI Transformations Fail (And It Has Nothing to Do With the Tech)

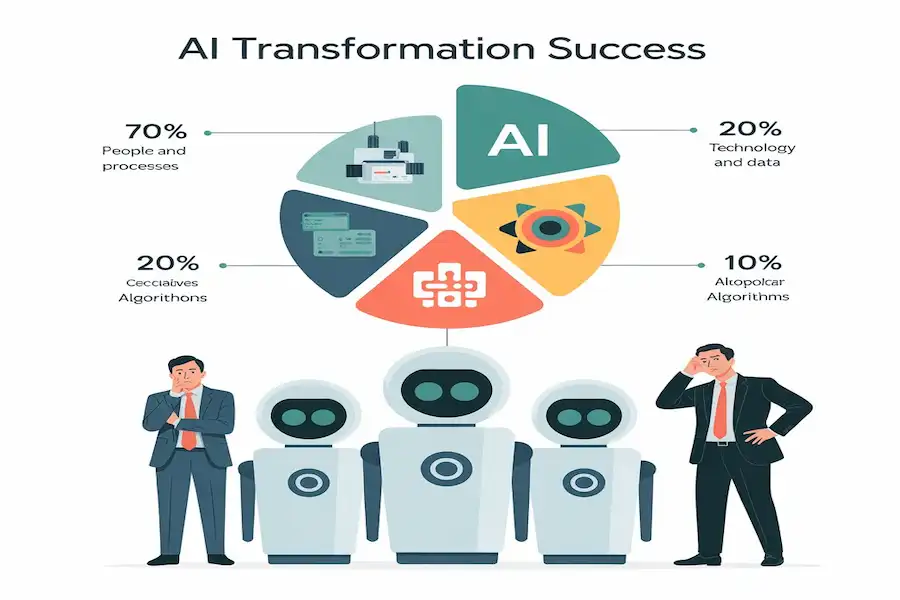

The BCG report ‘Where’s the Value in AI?’ surveyed over 1,000 executives and found something that should make every organization rethink its AI budget allocation. Companies that succeed at AI transformation put 70% of their effort into people and processes, 20% into technology and data, and just 10% into algorithms. Most struggling companies do the opposite.

Their conclusion was direct: organizations that prioritize technical issues over human ones almost always underdeliver on AI.
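To make the 70/20/10 split concrete, here is a minimal sketch that applies it to a budget figure. BCG describes the split in terms of effort, so treating it as a budget rule is an assumption made for illustration, and the names `SPLIT` and `allocate` are hypothetical.

```python
# Percentages from the 70/20/10 split described above.
# Applying them to a money budget is an illustrative assumption.
SPLIT = {
    "people_and_processes": 70,
    "technology_and_data": 20,
    "algorithms": 10,
}


def allocate(total_budget: int) -> dict:
    """Divide a whole-number budget according to SPLIT.

    Integer division rounds down, so a total that is not divisible
    by 100 can leave a small remainder unallocated.
    """
    if total_budget < 0:
        raise ValueError("total_budget must be non-negative")
    return {area: total_budget * pct // 100 for area, pct in SPLIT.items()}


if __name__ == "__main__":
    for area, amount in allocate(1_000_000).items():
        print(f"{area}: {amount}")
```

Comparing the result with how your current AI spend is actually distributed is a quick way to see which side of the split you are on.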

The same research found that 74% of companies had not yet unlocked meaningful value from their AI investments despite years of spending and hiring. In follow-up research, that picture had barely improved: sixty percent of companies reported no material gains from AI at all.

Here is what is actually going wrong:

Buying Tools Before Defining the Problem

Many organizations select AI platforms during budget season and then ask teams to justify them afterwards. This approach puts the technology ahead of the question it is supposed to answer.

The organizations in BCG’s AI leader group do the opposite. They identify a specific business problem first (say, reducing customer churn or cutting invoice processing time) and then find the right AI capability to address it. The result is fewer initiatives, but ones that actually deliver.


Treating AI Like Standard Software

Conventional software does what you tell it. You set the logic, it runs the logic. AI does not work that way. AI systems learn from data, produce outputs that shift over time, and can behave unpredictably when fed inputs they were not trained on.

Organizations that manage AI the same way they manage their CRM or their HR platform end up with neither proper oversight nor meaningful results. The governance model needs to match how AI actually behaves.

Rushing from Pilot to Scale

BCG’s research specifically named ‘pilot purgatory’ as one of the most common traps in AI adoption. Teams run promising experiments, get good results in controlled conditions, and then try to scale before the underlying conditions (data quality, process design, team readiness) are solid enough to support it.

Every premature scale-up creates noise, frustration, and a growing belief inside the organization that AI does not work. That belief becomes its own barrier.

AI Transformation vs Digital Transformation: What Is the Actual Difference?

These terms are often used interchangeably. They should not be.

| Digital Transformation | AI Transformation |
| --- | --- |
| Broader shift to technology-based operations | A specific layer within digital transformation |
| Covers cloud, mobile, data systems, automation | Focuses on embedding intelligence into decisions |
| Changes what tools the business uses | Changes how the business reasons and adapts |
| Primarily a process and infrastructure shift | A shift in organizational identity and judgment |
| Often treated as a project with an end date | Continuous: AI learns and evolves over time |

Digital transformation updated the infrastructure. AI transformation asks a harder question: now that the infrastructure exists, can we change how the organization thinks?

That second question is where most organizations underestimate the work involved. Infrastructure can be built. Thinking takes longer to change.

The Real Problem: People, Culture, and Leadership

The three things that most reliably block AI transformation are not on any technology vendor’s spec sheet. They are:

Leadership That Approves AI but Does Not Own It

The pattern is common. A senior leader champions AI in a quarterly review, approves a budget, and hands it to IT. Three months later, IT is waiting on priorities, business units are not aligned, and no one can say who is accountable for outcomes.

AI transformation requires a single owner who sits close enough to business strategy to make real trade-offs. Some organizations appoint a Chief AI Officer. Others form an AI Steering Committee with cross-functional decision-making authority. The structure matters less than the clarity: someone must own this with full accountability.

Culture That Punishes Failure

BCG’s AI at Work survey found that 46% of employees at organizations undergoing comprehensive AI redesign reported increased worry about job security, compared to 34% at less-advanced companies. That anxiety does not disappear when the training sessions end.

When people fear being wrong, they do not experiment. When they do not experiment, AI tools sit unused. Analysis by INSEAD of real corporate implementations found that employees consistently completed required AI training and then returned to their previous methods, not out of laziness, but because the culture had not made experimentation feel safe.

Psychological safety is not a soft concept. It is a hard requirement for AI adoption to work.

Process Design That Was Never Revisited

Automating a flawed process does not fix the process. It accelerates the flaw.

Real transformation means asking what a workflow should look like once AI handles the parts that do not require human judgment. That question requires redesign, not just automation. It is harder work, it takes longer, and it is the work that produces durable results.

Employee Fear and Resistance: The Part Nobody Talks About

Most AI implementation guides focus on roadmaps, tools, and timelines. Very few address what is actually happening inside people’s heads when they hear their company is rolling out AI.

The emotional progression is predictable. It starts with skepticism (‘this probably won’t affect my work’). Then it shifts to anxiety (‘what exactly is going to change?’). Then resistance (‘I don’t have bandwidth for this right now’). Some people make it to genuine curiosity. Many do not, especially when the communication from leadership has been vague or optimistic in a way that rings false.

BCG’s AI at Work data shows something counterintuitive: the more deeply AI is integrated into an organization, the more anxious employees tend to become, not less. Familiarity does not automatically reduce fear. What reduces fear is transparency about what is changing, honest answers to ‘what happens to my role,’ and visible evidence that leadership sees AI as something that helps people rather than replaces them.

What Actually Moves the Needle on Employee Resistance

- Tell people what is changing before it changes: vague announcements create worse anxiety than honest specifics

- Separate the conversation about efficiency from the conversation about jobs: conflating them immediately triggers defensive behavior

- Give employees ownership over how AI integrates into their own work: people who help design the change are far less likely to resist it

- Share early wins from real colleagues, not vendor case studies: peer proof carries more weight than external data

- Create a named channel or person to whom employees can bring questions without judgment

Middle Management: The Adoption Layer Nobody Fixes

C-suite leaders set the AI agenda. Frontline employees do the day-to-day work with AI tools. The layer in between, middle management, is where most adoption either accelerates or quietly dies.

Middle managers are in an uncomfortable position during AI transformation. Their traditional value has come from synthesizing information across their team and coordinating decisions upward. AI handles both of those functions more quickly than a person does.

Rather than openly resisting AI, threatened middle managers tend to create procedural friction. They add review steps. They hold back on endorsing tools. They frame AI initiatives as ‘things we’ll look at next quarter.’ None of this looks like resistance. It just looks like a busy manager with priorities.

How to Turn This Layer Into an Asset

- Get managers involved in shaping AI initiatives before rollout, not as recipients of decisions, but as contributors to them

- Show managers explicitly how AI adoption in their team improves their own performance metrics

- Give them specific AI tools that reduce the administrative side of managing: approvals, status updates, reporting

- Create recognition for managers whose teams hit strong adoption targets

The organizations that succeed at AI transformation tend to treat middle managers not as a neutral layer but as the most important adoption lever in the building.

Why AI Governance Is Different From Regular IT Management

Standard software behaves consistently. The same input produces the same output every time. You can test it once, document the behavior, and manage it with a checklist.

AI systems do not work that way. They are built on patterns in data, which means their outputs shift as the data environment changes. A model that performs well under one set of conditions may produce unreliable results when conditions shift, without making any obvious error.

That unpredictability is not a defect. It is the nature of how AI learns. But it does mean that every governance practice built for conventional software needs to be rethought for AI.

| Conventional Software Management | What Changes with AI |
| --- | --- |
| Test once, document, deploy | Test continuously: AI behavior drifts over time |
| Clear right/wrong outputs | Probabilistic outputs: results exist on a spectrum of confidence |
| Accountability sits with the developer | Accountability must be assigned across business, legal, and IT |
| Compliance checked at implementation | Compliance must be monitored on an ongoing basis |

The organizations building lasting AI capability are the ones that recognize this shift early and design governance structures around it rather than discovering the gap after a costly mistake.
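The idea of testing continuously rather than once can be sketched as a recurring check that compares recent model quality against a baseline recorded at deployment. This is a minimal illustration, not a monitoring product: the function name `drift_alert`, the choice of a mean-based comparison, and the 0.05 tolerance are all assumptions.

```python
from statistics import mean
from typing import Sequence


def drift_alert(
    baseline_scores: Sequence[float],
    recent_scores: Sequence[float],
    tolerance: float = 0.05,  # assumed threshold; set per use case
) -> bool:
    """Return True when recent quality has dropped below the baseline
    average by more than `tolerance`.

    Scores can be any quality measure where higher is better,
    such as the share of outputs a human reviewer accepted.
    """
    if not baseline_scores or not recent_scores:
        raise ValueError("both score lists must be non-empty")
    return mean(baseline_scores) - mean(recent_scores) > tolerance


if __name__ == "__main__":
    at_launch = [0.92, 0.91, 0.93]
    this_month = [0.84, 0.86, 0.85]
    if drift_alert(at_launch, this_month):
        print("Quality has drifted; route to the model owner for review")
```

Running a check like this on a schedule, with a named owner for the alert, is what turns a one-time test into ongoing governance.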

Three Things Every Organization Needs Before Scaling AI

Governance sounds like overhead. Done badly, it is. Done well, it is the thing that lets everyone else move faster because the rules are clear.

1. Data You Can Actually Trust

AI is entirely dependent on the quality of what it learns from. An organization that feeds AI inconsistent, incomplete, or poorly governed data will get inconsistent, incomplete, and unreliable AI outputs. That is not a technology problem. That is a data quality problem.

Before scaling any AI initiative, it is worth asking three questions about the data it will use: Do we know where this data comes from? Do we know what happens when it is wrong? And do we know who owns it?

Many organizations cannot fully answer any of those questions. That is the actual starting point.

2. Clear Checkpoints for Human Review

Speed is one of the main things AI brings to operations. But speed without review creates a new category of risk: errors that propagate at scale before anyone catches them.

Effective governance frameworks set specific points in any AI workflow where a human must review before the process continues. These are not approvals for their own sake. They are the moments where human judgment adds something that AI genuinely cannot: context, ethical consideration, knowledge of what is at stake.

Which decisions need a human checkpoint? Every organization has to answer this for itself. But the organizations that answer it explicitly, before deployment, are the ones that avoid the expensive mistakes.
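One way to make a checkpoint explicit is to write the rule down as code before deployment. The sketch below is an assumption-laden illustration: the category names in `ALWAYS_REVIEW`, the `CONFIDENCE_FLOOR` value, and the `AIDecision` structure are hypothetical, and each organization would substitute its own.

```python
from dataclasses import dataclass

# Hypothetical categories where a person must always review the output.
ALWAYS_REVIEW = {"hiring", "credit", "healthcare"}

# Assumed confidence threshold below which any decision gets reviewed.
CONFIDENCE_FLOOR = 0.90


@dataclass
class AIDecision:
    category: str      # the kind of decision the AI is making
    confidence: float  # model-reported confidence between 0 and 1
    summary: str       # short description shown to the reviewer


def needs_human_review(decision: AIDecision) -> bool:
    """Apply the checkpoint rule: sensitive category or low confidence."""
    if decision.category in ALWAYS_REVIEW:
        return True
    return decision.confidence < CONFIDENCE_FLOOR


def route(decision: AIDecision) -> str:
    """Return the name of the queue this decision should go to."""
    return "human_review_queue" if needs_human_review(decision) else "auto_proceed"


if __name__ == "__main__":
    print(route(AIDecision("hiring", 0.99, "Shortlist candidate")))
    print(route(AIDecision("invoice_matching", 0.95, "Match invoice to PO")))
```

The value is less in the code than in the conversation it forces: someone has to decide which categories go in the always-review set and who staffs the review queue.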

3. A Plan for Unauthorized AI Use

A Gartner survey of 302 cybersecurity leaders found that 69% of companies had identified employees using unauthorized AI tools, and Gartner now predicts that by 2030, 40% of enterprises will have experienced a security or compliance incident tied to what it calls ‘shadow AI.’

This is not primarily a discipline problem. Employees use unauthorized AI tools because they have a real need that the approved toolset is not meeting. Blocking access addresses the symptom. Understanding and addressing the need is the actual fix.

Organizations that offer well-governed, genuinely useful AI tools internally see unauthorized usage drop significantly, not because of enforcement, but because the alternative is better.

EU AI Act: What US Organizations Need to Know

The EU AI Act entered into force in August 2024, and most of its obligations are scheduled to apply from August 2026. For US companies that operate in European markets, serve EU customers, or process data belonging to EU citizens, this law has direct operational implications.

The Act distinguishes between AI systems by risk level. High-risk applications, such as those involved in hiring decisions, credit assessments, healthcare, education, and law enforcement, face the strictest requirements. If your AI sits in any of these categories, you need four things in place:

- A full inventory of every AI system your organization runs

- A documented risk assessment for each one

- A mechanism for human oversight that can intervene when needed

- Transparency records explaining how decisions are made

The more useful frame here is not compliance anxiety but operational clarity. Organizations that can accurately list what AI systems they are running, what those systems decide, and who is accountable, in a form that regulators can review, are also the organizations that have the information they need to manage AI effectively regardless of jurisdiction.
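An inventory like the one described above can start as a very small data structure. The sketch below is illustrative only: the field names, the `HIGH_RISK_AREAS` set, and the gap check mirror the four items listed in this section, not the legal text of the Act.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

# Use areas this article names as high risk; a simplification for illustration.
HIGH_RISK_AREAS = {"hiring", "credit", "healthcare", "education", "law_enforcement"}


@dataclass
class AISystemRecord:
    name: str
    use_area: str
    accountable_owner: Optional[str] = None
    risk_assessment_doc: Optional[str] = None   # link or document ID
    human_oversight: Optional[str] = None       # who can intervene, and how
    transparency_record: Optional[str] = None   # how decisions are explained


def gaps(record: AISystemRecord) -> List[str]:
    """List the items that are still missing for one system."""
    checks = {
        "accountable owner": record.accountable_owner,
        "risk assessment": record.risk_assessment_doc,
        "human oversight": record.human_oversight,
        "transparency record": record.transparency_record,
    }
    return [label for label, value in checks.items() if not value]


def review_inventory(inventory: List[AISystemRecord]) -> Dict[str, List[str]]:
    """Map each high-risk system that has gaps to its missing items."""
    return {
        r.name: gaps(r)
        for r in inventory
        if r.use_area in HIGH_RISK_AREAS and gaps(r)
    }


if __name__ == "__main__":
    inventory = [
        AISystemRecord("resume-screener", "hiring", "VP People", "RA-014"),
        AISystemRecord("meeting-summarizer", "productivity"),
    ]
    print(review_inventory(inventory))
```

Even a spreadsheet with these columns gives you the same benefit; the point is that every system has a row and every row has an owner.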

People-First Framework for AI Transformation: 5 Steps

Based on INSEAD’s research into real-world AI deployments and BCG’s analysis of what separates AI leaders from AI laggards, here is a framework built around the human side of AI transformation.

Step 1: Build a Shared Foundation First

One of the most consistent findings from organizations that have successfully scaled AI is that they invested heavily in AI literacy across all levels before rolling out a single major tool.

This does not mean everyone becomes a data scientist. It means that a board member understands what a large language model can and cannot do. A frontline worker knows which tasks AI will handle and which ones remain theirs. A middle manager can make a reasonable decision about which AI tools are appropriate for their team.

Without that foundation, every AI rollout starts from confusion. With it, rollouts start from clarity.

Step 2: Address Fear Before Rolling Out Tools

Fear is most damaging when it goes unaddressed. The best time to talk about job security, role changes, and what AI is going to mean for different positions is before the tools arrive, not three months after adoption has already stalled.

Organizations that have handled this well tend to share one thing in common: leaders who named the fear out loud. Not to dismiss it, but to respond to it honestly. The message that actually reduces resistance is not ‘don’t worry, AI won’t replace you.’ It is ‘here is what is changing, here is why, and here is how we are thinking about your role through that change.’

Step 3: Make Human Work Worth More

When AI takes on the repetitive parts of a job, the question that follows is: what does the person now do with that time? If the answer is ‘more of the same kind of work, just faster,’ that is not transformation. That is acceleration.

The organizations seeing the most durable value from AI are the ones that actively redesign roles around what humans are still distinctly better at: judgment, creativity, relationships, and context. That redesign requires intentional management, not just access to AI tools.

Step 4: Design Human and AI Work Together

The highest-value AI deployments are not ones where AI runs autonomously and humans check in occasionally. They are ones where workflows are deliberately designed so that AI contributes what it is good at, humans contribute what they are good at, and the handoffs between those contributions are clear.

This requires workflow redesign, not just tool deployment. It takes longer up front. It produces outcomes that last.

Step 5: Move Before You Are Certain

Every organization that has built a genuine AI capability started earlier than they felt ready. JPMorgan, for example, began internal AI experiments before generative AI had reached mainstream awareness. By the time most financial institutions were trying to figure out where to start, JPMorgan had already built internal expertise, identified what worked, and established the governance frameworks that would let them scale responsibly.

Waiting for the technology to mature before beginning the people and culture work is the single most common strategic mistake in AI transformation. The technology will keep changing. Start building the human side now.

Building an AI-Ready Culture, Phase by Phase

Culture change happens in layers. You cannot announce it into existence. Here is a realistic phase structure that organizations have used to build AI readiness without overwhelming their teams.

Phase 1 — Awareness (Months 1 to 2)

- Run role-specific AI literacy sessions, not one-size-fits-all presentations

- Share concrete examples of AI use in your industry: real outcomes, not vendor demos

- Open a low-stakes space (Slack channel, internal forum) where employees can ask questions without judgment

Phase 2 — Experimentation (Months 2 to 4)

- Pick one team and one well-defined problem, then give them a specific AI tool and a specific goal

- Define success in business terms before starting: not ‘we used the tool’ but ‘we cut processing time by 25%’

- Share what you learn openly, including what did not work, so the rest of the organization learns from the pilot

Phase 3 — Integration (Months 4 to 12)

- Identify the employees who took to AI naturally in the pilot and formally make them internal champions

- Build out role-specific guidance documents based on what the pilot taught you

- Establish a Center of Excellence with clear ownership of AI standards and governance

- Connect AI adoption metrics to team performance conversations, not as a penalty, but as a measure of progress

None of this is fast. Cultural change on this scale typically takes 6 to 12 months to take hold. Organizations that try to compress it usually end up repeating it.

Common AI Problems Organizations Run Into

Even organizations that have done the preparation work hit recurring issues. Here are the four most common ones, and what they actually signal.

1. Pilots That Never Become Products

A pilot delivers promising numbers. Leadership celebrates. The pilot then sits in limbo for months or years without scaling. BCG specifically named this ‘pilot purgatory’ as one of the central obstacles to value creation in AI.

The cause is almost always the same: the pilot proved the technology works, but no one prepared the organizational conditions (data infrastructure, process design, change readiness) needed to scale it. Treat pilots as proof of organizational readiness, not just technical capability.

2. Skills Gaps That Training Courses Don’t Fill

AI skills gaps are real. But the instinct to solve them purely through courses and certifications misses something important. Most organizations do not need everyone to become technically proficient. They need teams that know how to work alongside AI: how to frame problems for it, how to evaluate its outputs, how to recognize when it is wrong.

That kind of AI fluency is built through doing, not through coursework. The most effective upskilling happens inside real work on real tools, with coaching from people who have already figured out the learning curve.

3. Ethical Risks That Surface Too Late

Amazon’s experimental recruiting AI was scrapped, as Reuters reported in 2018, after it was found to systematically score male candidates higher than equally qualified women. The model had learned this pattern from years of historical hiring data in which men were hired more frequently. No one had checked whether the data it learned from reflected the outcomes the company actually wanted.

Building an ethics checkpoint into AI development is not a theoretical exercise. It is the question of whether anyone reviewed what the AI learned to value before deploying it in a context where that learning causes harm.

4. Legacy Systems That Were Never Designed to Talk to Anything New

Most large organizations carry technical infrastructure built 15 to 20 years ago. Connecting modern AI tooling to that infrastructure is slow, expensive, and often requires tradeoffs between speed and data integrity.

The practical advice here is narrow scope, not grand ambition. Start with AI use cases that can run in parallel with, or entirely separately from, legacy systems. Build on success. Do not attempt to modernize the entire infrastructure stack while simultaneously learning how to run AI at scale.

What Real AI Transformation Looks Like: Wins and Failures

What Worked: Microsoft’s Approach to Copilot Rollout

When Microsoft introduced AI assistant features across its core productivity tools (Word, Excel, Outlook, and Teams), the adoption numbers held up in a way that most enterprise AI rollouts do not. One reason stands out: Microsoft embedded AI into software people already used every day. The friction was minimal because the context was familiar.

But the less visible part of the story is that Microsoft paired the tool rollout with a substantial internal training program designed specifically to help employees understand what the tools could do and, equally important, what they should not be used for. That combination of familiar context and honest capability education is the pattern that drives durable adoption.

What Worked: JPMorgan’s Early Investment in Experimentation

JPMorgan began internal AI experiments well before the public launch of large language model tools made AI a mainstream business topic. Their approach was to give business units room to experiment while pairing that freedom with governance and frameworks that allowed successful experiments to scale.

When the wider financial services industry began seriously thinking about AI strategy, JPMorgan already had working systems, governance structures, and internal expertise. The head start came not from better technology access, but from starting the organizational learning earlier.

What Did Not Work: IBM Watson Health

IBM’s Watson Health project was one of the most publicized AI efforts in healthcare history. IBM invested billions to position Watson as a tool for cancer diagnosis and treatment planning. The project was wound down in 2022 without delivering the outcomes it had promised.

The reasons were human, not computational. The data Watson was trained on was not representative of real clinical practice. Oncologists did not trust its recommendations enough to act on them. Clinical workflows were never redesigned to integrate AI inputs into treatment decisions. The system worked technically but was never actually embedded into the practice of medicine.

What Did Not Work: Amazon’s Candidate Screening Model

Amazon built a machine learning model to assist with hiring by scoring candidate applications. The model was trained on years of historical applications and hiring decisions, data from a period in which the company hired predominantly male candidates.

The model learned that pattern and replicated it. Applications from women were scored lower. The tool was scrapped after the bias was discovered, a decision that became public in 2018. The lesson the case offers is specific: an AI model reflects the priorities embedded in its training data. Checking whether that training data reflects the outcomes you actually want is not optional.

AI Transformation Readiness Self-Assessment

Rate each item 1 to 5, where 1 means this is not in place and 5 means this is fully operational. Maximum score is 70. Use the scoring guide below.

| Score Range | What It Signals | Recommended Next Step |
| --- | --- | --- |
| 57–70 | Strong foundation across all four dimensions | Begin or accelerate scaling; governance and culture are solid |
| 43–56 | Uneven readiness: some areas strong, others at risk | Identify your two weakest areas and address those before scaling |
| Below 43 | Foundational gaps in multiple areas | Do not attempt large-scale AI rollout; fix foundations first |

Leadership and Strategy

- There is a defined AI strategy that connects to specific business goals, not just a general intention to ‘use AI’

- At least one named senior leader has accountability for AI outcomes

- We have a structure (CAIO, AI Board, or Steering Committee) that resolves AI trade-offs across departments

People and Culture

- Employees at all levels have received role-specific AI education, not generic awareness training

- Managers feel involved in AI strategy, not just notified of it

- There is a named process for employees to raise concerns about AI tools

- Early AI adopters are recognized, not just those with technical roles

Data and Governance

- Data used in AI applications has been audited for quality and consistency

- There is a documented policy on what AI tools employees can and cannot use

- High-risk AI decisions have a defined human review checkpoint before action is taken

- An ethics review process exists for any new AI application that affects people

Process and Implementation

- At least one AI initiative has been scoped around a specific problem before a tool was chosen

- AI pilots are evaluated in business outcome terms, not technology performance terms

- At least one major workflow has been redesigned around AI rather than just having AI added to it
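The scoring arithmetic can be sketched in a few lines. The checklist has 14 items across four dimensions (3, 4, 4, and 3), which is where the maximum of 70 comes from. The function name `score_assessment` and the returned structure are hypothetical, and picking the two weakest dimensions by average rating is one reasonable reading of the "two weakest areas" advice in the scoring guide.

```python
from typing import Dict, List

# Number of checklist items in each dimension of the self-assessment.
ITEMS_PER_DIMENSION = {
    "Leadership and Strategy": 3,
    "People and Culture": 4,
    "Data and Governance": 4,
    "Process and Implementation": 3,
}


def score_assessment(ratings: Dict[str, List[int]]) -> dict:
    """Total the 1-to-5 ratings and map the total to a scoring band."""
    total = 0
    averages = {}
    for dimension, expected in ITEMS_PER_DIMENSION.items():
        scores = ratings[dimension]
        if len(scores) != expected:
            raise ValueError(f"{dimension} needs {expected} ratings")
        if any(not 1 <= s <= 5 for s in scores):
            raise ValueError("each rating must be between 1 and 5")
        total += sum(scores)
        averages[dimension] = sum(scores) / len(scores)

    if total >= 57:
        band = "Strong foundation"
    elif total >= 43:
        band = "Uneven readiness"
    else:
        band = "Foundational gaps"

    # The two dimensions with the lowest average rating, lowest first.
    weakest = sorted(averages, key=averages.get)[:2]
    return {"total": total, "band": band, "weakest": weakest}


if __name__ == "__main__":
    example = {
        "Leadership and Strategy": [4, 3, 3],
        "People and Culture": [2, 2, 3, 3],
        "Data and Governance": [4, 4, 3, 4],
        "Process and Implementation": [3, 4, 4],
    }
    print(score_assessment(example))
```

Averages are used for the weakest-area ranking because the dimensions have different numbers of items, so raw subtotals would not be comparable.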

How to Actually Make AI Transformation Work: 5 Steps

Step 1: Start with the Outcome, Not the Tool

Name a specific, measurable business problem that matters. Then identify whether AI is the right approach for it. This order (outcome first, tool second) is one of the clearest separators between organizations that generate value and those that accumulate unused licenses.

Step 2: Do the People Work Before the Technology Work

Change management is not the soft part. It is the harder part, and it takes longer. Starting it earlier than feels necessary is almost always the right call. The organizations that get AI adoption right tend to have spent six to twelve months building readiness before the tools even arrived.

Step 3: Clean Your Data Before Trusting Your AI

Run an honest audit of the data your AI is going to rely on. Where did it come from? How often is it updated? Who is responsible for it when it is wrong? These are operational questions, not academic ones. The answers determine whether your AI is usable.

Step 4: Redesign the Work, Not Just the Tool

Ask what a workflow should look like if AI is handling the parts that do not require human judgment. Design from that vision outward. Do not design AI into the gaps of an existing process; design the process around what AI and humans together can actually do.

Step 5: Measure What the Business Cares About

The metrics that matter are adoption rate, error reduction, time saved per outcome, cost per decision, and employee sentiment. The metrics that do not tell you enough on their own are model accuracy, uptime, and number of tools deployed. Close the gap between technical performance metrics and business outcome metrics, and you will have a much clearer view of what your AI is actually doing for you.
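A few of these business metrics can be computed from numbers most pilots already collect. The sketch below is a minimal illustration: `PilotSnapshot`, its field names, and the choice of three metrics are assumptions, and it presumes the before and after figures come from comparable samples.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class PilotSnapshot:
    licensed_users: int
    active_users: int
    baseline_minutes_per_task: float  # before AI
    current_minutes_per_task: float   # with AI
    baseline_errors: int              # errors in the pre-AI sample
    current_errors: int               # errors in a same-size sample with AI


def business_metrics(s: PilotSnapshot) -> Dict[str, float]:
    """Compute adoption rate, time saved, and error reduction as fractions."""
    if s.licensed_users <= 0 or s.baseline_minutes_per_task <= 0 or s.baseline_errors <= 0:
        raise ValueError("baseline figures must be positive")
    return {
        "adoption_rate": s.active_users / s.licensed_users,
        "time_saved": (s.baseline_minutes_per_task - s.current_minutes_per_task)
        / s.baseline_minutes_per_task,
        "error_reduction": (s.baseline_errors - s.current_errors) / s.baseline_errors,
    }


if __name__ == "__main__":
    snapshot = PilotSnapshot(200, 120, 20.0, 15.0, 40, 30)
    for name, value in business_metrics(snapshot).items():
        print(f"{name}: {value:.0%}")
```

None of these figures requires access to the model itself, which is the point: they describe what changed for the business, not how the technology performed.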

Governance Is Not the Brake. It Is the Guardrail

The organizations that treat AI governance as a blocker tend to have confused governance with bureaucracy. Governance, done properly, is what allows an organization to move faster with confidence, because the rules are set, responsibilities are clear, and teams are not stopping to ask permission for every decision.

There is also a direct financial argument. Without centralized AI governance, different departments independently buy different AI tools. None of those tools connect to each other. Data sits in separate silos. You pay for overlapping capabilities while capturing none of the integration value. A governance framework replaces that fragmentation with a unified architecture where each AI investment can build on the others.

The organizations winning with AI in 2025 and 2026 are not necessarily the ones that moved fastest. They are the ones that combined reasonable speed with deliberate structure: governance that enabled action rather than slowing it down.

FAQs

Is AI transformation a technology problem?

No. According to BCG’s research across more than 1,000 senior executives, about 70% of the challenges in AI transformation relate to people and process. Only around 10% trace back to the AI algorithms themselves. The technology is rarely the limiting factor.

Why do AI transformations fail?

The most consistent reasons are: lack of clear business goals before tools are chosen, insufficient investment in employee readiness, processes that are automated rather than redesigned, and data that is not reliable enough to support AI outputs. Leadership misalignment (approving AI without owning it) is also a major factor.

What is the difference between AI transformation and digital transformation?

Digital transformation is the broader shift to technology-enabled operations. AI transformation is a specific layer within that shift, focused on embedding learning and intelligence into business decisions and workflows. The key difference is that AI transformation changes how an organization reasons, not just what tools it uses.

How do you build an AI-ready culture?

Start with role-specific education before any tools arrive. Address employee fears openly and early. Give people a real role in redesigning their work with AI. Recognize early adopters. Measure cultural progress alongside tool adoption. Expect the process to take 6 to 12 months to take hold.

What is the role of leadership in AI transformation?

Leadership needs to define the strategic purpose of AI, not just approve the budget for it. They need to fund change management, communicate the rationale for AI adoption clearly and honestly, and model the behavior they want from others. Without visible leadership ownership, AI transformation typically stalls at the proof-of-concept stage.

How do you measure AI transformation success?

Measure in business outcomes: cost reduction per decision, time saved on specific processes, reduction in error rates, customer satisfaction changes, and adoption percentage by role. Pure technology metrics like model accuracy or system availability are necessary but not sufficient; they do not tell you whether AI is actually changing the business.

Conclusion

The organizations that have built durable AI capability share a common pattern, and it is not the one most people expect. They did not have the newest tools. They did not have the largest AI teams. What they had was clarity about what they were trying to accomplish, leadership that took personal ownership of the human side of the change, and a genuine willingness to redesign work rather than just automate it.

I have watched organizations with mid-range technology budgets outperform significantly better-resourced competitors because they understood one thing their competitors did not: the hard part of AI transformation is not a technology problem, and it cannot be outsourced to a vendor.

The companies that struggle longest are almost always the ones that treated AI as an IT initiative, something to be delivered, documented, and handed over.

AI transformation is not delivered. It grows, slowly, through repeated practice, honest communication, and a management culture that is willing to sit in the discomfort of change long enough to come out the other side. If you are at the beginning of that journey, the most useful thing you can do right now is not to pick a platform.

It is to have an honest conversation with your team about what they actually need, what they are worried about, and what kind of support would make them more willing to try. Start there. The technology will follow.