Your AI demo just worked perfectly. Everyone loved it. Then you tried using it across your whole company. Everything crashed. Why does this keep happening?

AI transformation is a problem of governance, not technology. Your AI tools work fine. Your tech team did a great job. What’s missing? The rules and systems to manage these powerful tools properly.

Most companies focus on finding the fastest AI. They ignore the bigger issue: how to control and manage these systems safely. This guide shows you exactly how to fix this problem.

Why AI Projects Keep Failing (It’s Not Your Technology)

Imagine buying a super-fast race car. But you forget to add brakes. Or a steering wheel. Sounds crazy, right?

That’s what most companies do with AI.

They want the fastest tools. They want the newest technology. But they skip the control systems. The result? You have power but no direction. Projects fail. Teams get frustrated. Money gets wasted.

This is why AI transformation is a problem of governance. Speed means nothing without control.

The Shocking Numbers

Boston Consulting Group found something surprising:

• About 70% of AI implementation challenges come from people and process issues

• Only about 22% of companies are starting to see substantial gains from AI

• Just 4% consistently generate significant value

Why such a huge gap? Companies treat AI like regular software. They think they can just install it and go. But AI needs different management. It needs governance.

Real Stories of What Goes Wrong

Here’s what happens in real companies:

Marketing Disaster: A team used AI to write customer emails. Nobody checked what data the AI used. The AI accidentally sent private financial info to thousands of customers. The company got sued.

Hiring Mistake: HR used AI to screen job applications. The AI learned from old data that favored men. Now it rejects qualified women automatically. The company faces discrimination lawsuits.

Shadow AI Problem: Employees used ChatGPT for work. They pasted company secrets into it. Nobody noticed until a competitor somehow knew their strategy.

All these problems could have been stopped with proper governance. This proves AI transformation is a problem of governance, not technology.

How AI Is Completely Different from Regular Software

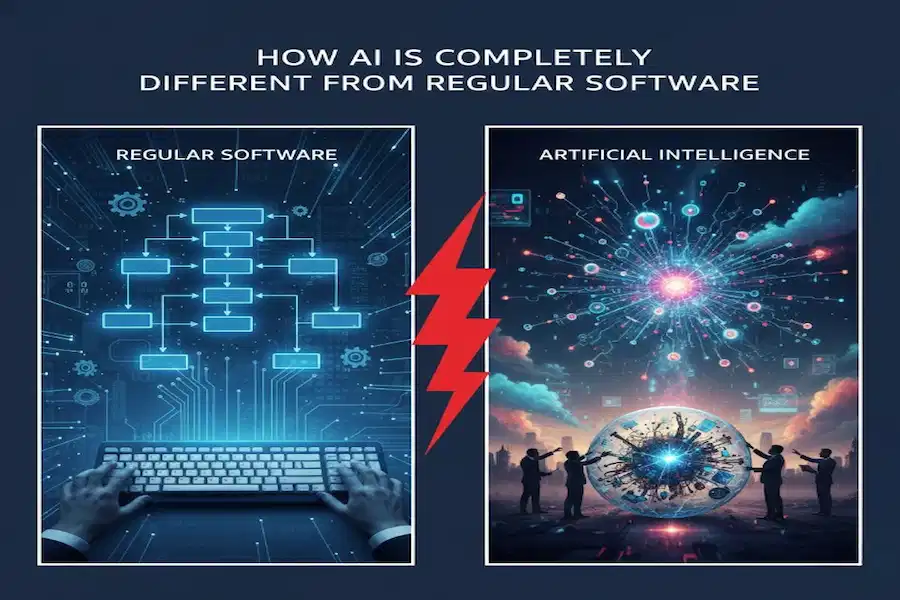

Regular software does the same thing every time. Excel works the same on Monday and Friday. Your email never changes how it works.

AI? Completely different.

AI learns from data. Its behavior depends on what it was trained on and what it sees. What worked yesterday might give weird results today, because the inputs shifted or the model was updated. This is why AI transformation is a problem of governance. You need different rules for things that change and learn.

| Regular Software | AI Systems |
| --- | --- |
| Does exactly what you tell it | Learns and changes on its own |
| Same result every time | Different results based on data |
| Easy to understand | Sometimes mysterious |
| One person responsible | Unclear who’s accountable |

Your old IT rules don’t work for AI. You need new rules for systems that change and learn.

The Learning Problem

Think of it this way:

Regular software is like a calculator. You press 2 + 2 and always get 4. It never changes.

AI is like a student. It learns from examples. Good examples? It learns well. Bad examples? It learns bad habits. Sometimes it learns things you never meant to teach it. That’s why governance matters. Someone needs to watch what AI learns.

Three Things Every Company Needs Right Now

AI transformation is a problem of governance. Here are the three building blocks you must have:

1. Control Your Data Properly

The best AI becomes useless with bad data. Or worse, dangerous. Governance creates clear rules for handling information:

• Where did this data come from?

• Who can see and use it?

• How long do we keep it?

• What data is too sensitive for AI?

• Is the data accurate and fair?

Real example: Your marketing team wants AI to personalize emails. Great! But governance stops them from uploading sensitive financial data to a public chatbot. It turns vague privacy ideas into hard, specific rules.
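To make "hard, specific rules" concrete, here is a minimal sketch of how the questions above could become a check that runs before data reaches an AI tool. Everything in it is an assumption for illustration: the `Sensitivity` tiers, the `Dataset` fields, the tool names in `TOOL_CEILING`, and the `may_send` helper are hypothetical, not part of any standard or product.

```python
from dataclasses import dataclass
from enum import IntEnum


class Sensitivity(IntEnum):
    """Hypothetical sensitivity tiers; a real policy would define its own."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3  # e.g. financial or health records


@dataclass(frozen=True)
class Dataset:
    name: str
    source: str            # where the data came from
    sensitivity: Sensitivity
    retention_days: int    # how long we keep it


# Assumed policy: the highest sensitivity tier each tool may receive.
TOOL_CEILING = {
    "public_chatbot": Sensitivity.PUBLIC,
    "approved_internal_llm": Sensitivity.CONFIDENTIAL,
}


def may_send(dataset: Dataset, tool: str) -> bool:
    """Return True only if the tool is known and cleared for this tier."""
    ceiling = TOOL_CEILING.get(tool)
    if ceiling is None:
        return False  # unknown tools are denied by default
    return dataset.sensitivity <= ceiling


campaign_copy = Dataset("campaign_copy", "marketing", Sensitivity.INTERNAL, 365)
account_balances = Dataset("account_balances", "core_banking", Sensitivity.RESTRICTED, 2555)

print(may_send(campaign_copy, "approved_internal_llm"))  # True
print(may_send(account_balances, "public_chatbot"))      # False
```

The deny-by-default branch is the design choice that matters here: a tool nobody has reviewed gets no data at all, which is the written-down version of "what data is too sensitive for AI?"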

2. Keep Humans in Charge

We’re moving toward AI that takes actions by itself. This creates serious risks. Good frameworks decide exactly where humans must stay in control:

• AI can write code → Humans check before it goes live

• AI can summarize meetings → Humans review before sharing

• AI can suggest job candidates → Humans make final decision

• AI can recommend treatments → Doctors have final say

These checkpoints aren’t bottlenecks. They’re safety valves that prevent terrible mistakes.
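The checkpoints above can be sketched as a simple approval gate. This is an illustration under stated assumptions, not a reference design: the action names in `REQUIRES_HUMAN`, the `ProposedAction` type, and the `can_execute` function are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed mapping from action type to whether a human must sign off.
# The four gated entries mirror the checkpoints listed above.
REQUIRES_HUMAN = {
    "deploy_code": True,
    "share_meeting_summary": True,
    "hire_decision": True,
    "treatment_plan": True,
    "draft_internal_note": False,  # hypothetical low-risk action
}


@dataclass
class ProposedAction:
    kind: str
    payload: str
    approved_by: Optional[str] = None  # reviewer's identity once signed off


def can_execute(action: ProposedAction) -> bool:
    """Allow an action only if it is ungated or a human has approved it."""
    # Unknown action types are treated as gated (fail closed).
    needs_human = REQUIRES_HUMAN.get(action.kind, True)
    return (not needs_human) or action.approved_by is not None


patch = ProposedAction("deploy_code", "fix: null check")
print(can_execute(patch))  # False

patch.approved_by = "reviewer@example.com"
print(can_execute(patch))  # True
```

Recording who approved, rather than a bare yes or no, also gives you the start of an audit trail.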

3. Stop Shadow AI Chaos

Your employees want to help. So they’re:

• Pasting confidential notes into ChatGPT

• Using random image creators for presentations

• Feeding customer data into unapproved tools

This shadow AI might be your biggest security threat today.

Simply blocking websites rarely works. Smart governance asks: Why are employees doing this? Then it provides secure, approved tools that actually meet their needs. If people sneak around your rules, your rules are probably the problem.

New Laws That Change Everything

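The EU AI Act in 2026

For years, AI regulations were something coming someday. That day is here. The EU AI Act entered into force in August 2024. Its obligations phase in through 2027, with most of them scheduled to apply from August 2026. Its top penalties go beyond GDPR’s: up to €35 million or 7% of global annual turnover for the most serious violations.

These aren’t suggestions. It’s law with real consequences. This proves AI transformation is a problem of governance, not just technology.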

What High-Risk AI Must Do Now

The Act’s high-risk category covers AI in critical areas such as education, employment, law enforcement, and credit scoring. If you work in or sell to the EU, you need these now:

• Complete inventory: List every AI system you use

• Risk assessments: What could go wrong with each?

• Human oversight: Let people intervene when AI makes mistakes

• Transparency: Explain how systems make decisions

You can’t govern what you don’t know you have. That inventory becomes your foundation.
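As a rough sketch, the inventory can start as one structured record per system, with a check for which of the requirements above are still missing. The field names, the `HIGH_RISK_AREAS` set (taken from the areas named in this section, not from the legal text), and the `gaps` method are assumptions for illustration only.

```python
from dataclasses import dataclass, field

# Areas named in this section as high-risk; not a complete legal list.
HIGH_RISK_AREAS = {"education", "employment", "law_enforcement", "credit_scoring"}


@dataclass
class AISystem:
    name: str
    owner: str
    area: str
    risks: list[str] = field(default_factory=list)  # risk assessment notes
    human_override: bool = False                    # can a person intervene?
    decision_docs: str = ""                         # where decisions are explained

    def is_high_risk(self) -> bool:
        return self.area in HIGH_RISK_AREAS

    def gaps(self) -> list[str]:
        """List the requirements a high-risk system has not yet met."""
        if not self.is_high_risk():
            return []
        missing = []
        if not self.risks:
            missing.append("risk assessment")
        if not self.human_override:
            missing.append("human oversight")
        if not self.decision_docs:
            missing.append("transparency")
        return missing


inventory = [
    AISystem("resume_screener", "hr", "employment"),
    AISystem("meeting_summarizer", "it", "productivity"),
]

for system in inventory:
    print(system.name, system.gaps())
# resume_screener ['risk assessment', 'human oversight', 'transparency']
# meeting_summarizer []
```

Even a spreadsheet with these columns does the job. What counts is that every system has an owner and a visible list of gaps.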

The Problem with Different Countries Having Different Rules

While Europe has clear laws, the rest of the world takes different approaches:

• China: Strict content controls and government oversight

• United States: Different rules for different industries

• European Union: One big framework for all high-risk AI

• Gulf Region: Still creating rules while investing billions

A global company might need three or four different governance strategies. Annoying? Yes. But it’s reality. This means you can’t build one framework and be done. You need flexible structures.
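One way to picture a "flexible structure" is a shared baseline of controls plus a per-region overlay. The sketch below assumes this layering. The control names are placeholders chosen for illustration and are not statements of what any law requires.

```python
# Controls every deployment gets, regardless of region (assumed baseline).
BASELINE = {"ai_inventory", "risk_assessment"}

# Placeholder overlays: illustrative only, not legal requirements.
REGIONAL_OVERLAYS = {
    "EU": {"human_oversight", "transparency_docs"},
    "US": {"sector_specific_review"},
    "CN": {"content_controls"},
}


def required_controls(regions):
    """Union the baseline with the overlay for each region served."""
    controls = set(BASELINE)
    for region in regions:
        # Regions with no overlay yet fall back to the baseline alone.
        controls |= REGIONAL_OVERLAYS.get(region, set())
    return controls


print(sorted(required_controls(["EU", "US"])))
# ['ai_inventory', 'human_oversight', 'risk_assessment',
#  'sector_specific_review', 'transparency_docs']
```

When a rule changes in one region, you edit one overlay instead of rewriting the whole framework.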

Why AI Governance Is So Hard to Do

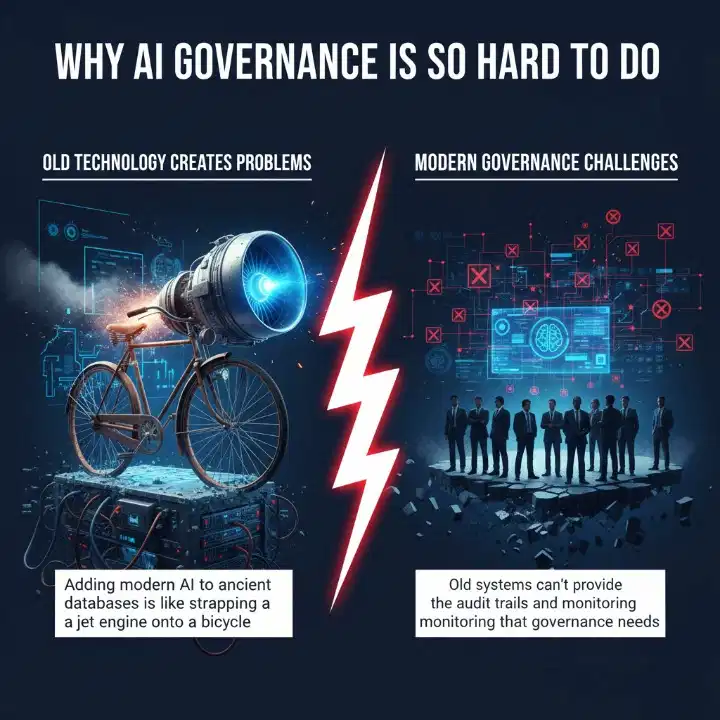

If governance is so important, why aren’t companies just doing it? Because it’s incredibly difficult. Here’s what gets in the way in practice:

Old Technology Creates Problems

Most large companies run on systems built 20 years ago. Adding modern AI to ancient databases is like strapping a jet engine onto a bicycle. It doesn’t work well. Old systems can’t provide the audit trails and monitoring that governance needs.

Nobody Knows Who Should Be in Charge

Who should run these programs?

• Lawyers don’t understand the code

• Engineers don’t understand legal requirements

• Business leaders want speed, not guardrails

• IT security teams are already overwhelmed

There’s a huge shortage of people who understand both AI and regulations. Until companies invest in this expertise, governance efforts will struggle.

Company Culture Fights Against Governance

In many companies, the governance team gets labeled the Department of No. They’re seen as obstacles. This view is backwards. Good governance is like guardrails on a mountain road. They let you drive faster safely. Until culture shifts to view governance as helpful, resistance will keep undermining it.

What’s Happening Right Now in 2026

ISO/IEC 42001 Becomes the Gold Standard

You’ll start seeing this number everywhere: ISO/IEC 42001. This global standard for AI management is becoming the certification companies want.

What makes it different? It focuses on ethics by design. This means building responsible practices into code from day one, not checking ethics after.

It requires teams from different departments working together:

• Technology teams

• Legal departments

• Human resources

• Business leadership

• Risk management

This team approach ensures all perspectives shape how AI gets built and used.

Major Companies Face Real Consequences

Several big companies have already been fined millions:

• Banks fined for lending AI that discriminated

• Tech companies penalized for privacy violations

• Retailers sued for biased hiring algorithms

These aren’t theoretical risks. This is real money being lost right now. This proves AI transformation is a problem of governance.

Why Governance Actually Makes You Move Faster

Here’s something that seems backwards: companies with strong governance often move faster than competitors. How? They’ve established clear rules. Their teams can work with confidence instead of constantly worrying about violations or disasters. Companies without governance find themselves stalled by risks they can’t name.

The Money Argument

People usually argue for governance to avoid lawsuits. But there’s a stronger business reason: money. Ungoverned AI wastes money.

Without central strategy:

• Marketing buys an AI copywriter

• Sales buys an AI email tool

• HR buys an AI recruiter

• IT buys an AI coding assistant

None of these talk to each other. All create data silos. You end up paying several times for overlapping capabilities. Governance creates unified architecture. Leadership can measure actual ROI because everyone uses the same rules.
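A central list of purchases makes this overlap easy to spot. The sketch below groups tools by the capability they provide. The tool names, capability labels, and costs are invented for illustration, and `overlapping_spend` is a hypothetical helper.

```python
from collections import defaultdict

# Hypothetical purchase records: (department, tool, capability, annual_cost)
purchases = [
    ("Marketing", "CopyBot", "text_generation", 12000),
    ("Sales", "MailGenie", "text_generation", 9000),
    ("HR", "HireAI", "candidate_screening", 15000),
    ("IT", "CodePal", "code_generation", 20000),
]


def overlapping_spend(records):
    """Group purchases by capability and keep those bought more than once."""
    by_capability = defaultdict(list)
    for dept, tool, capability, cost in records:
        by_capability[capability].append((dept, tool, cost))
    return {cap: items for cap, items in by_capability.items() if len(items) > 1}


for capability, items in overlapping_spend(purchases).items():
    total = sum(cost for _, _, cost in items)
    print(capability, [dept for dept, _, _ in items], total)
# text_generation ['Marketing', 'Sales'] 21000
```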

Step-by-Step Plan for Building Governance

Stop launching more test projects. Here’s what to do instead. Focus on these governance steps:

Step 1: Pick One Important Process

Don’t try governing ten things at once. Choose one workflow where speed or risk truly matters. Like processing orders, handling claims, or onboarding customers.

Step 2: Map Your Current Workflow Honestly

Find out where decisions happen. Where work gets stuck. Where human judgment is truly needed versus just habit. No pretending things work better than they do.

Step 3: Define Your Operating Principles

Create a short list that helps you make tough choices:

• What must never be sacrificed? (Safety, trust, privacy)

• What comes second? (Ethics, fairness, transparency)

• What’s legally required? (Compliance)

• What should the system optimize? (Speed, value, productivity)

Step 4: Redesign the Whole Process

Assign clear roles to humans and AI. Remove unnecessary steps. Build paths for unusual cases. This isn’t about adding AI to old processes. It’s about reimagining the entire workflow.

Step 5: Measure Real Results

Tie your governance to real business value. Track error rates, compliance issues, time-to-value, and actual profit. Not just the number of AI tools deployed.

What Winning Companies Look Like

Common Success Patterns

Companies succeeding with AI share these traits. They don’t have the newest tech or biggest budgets. What they do have:

• Clear accountability: Everyone knows who’s responsible

• Transparent decisions: Teams understand and trust the process

• Regular reviews: Governance changes as tech and rules change

• Executive commitment: Leaders treat governance as an advantage

• Cross-team work: Breaking walls between departments

These companies view governance as a competitive advantage. While competitors struggle with fears and incidents, they move confidently.

Real Examples

Financial Services: Created a central AI board that reviews all high-risk apps. Result? They deployed AI 40% faster because teams knew exactly what approvals they needed.

Healthcare Provider: Built strict data governance for patient info. Their AI tools won regulatory approval quickly because the documentation was already in place.

Retail Chain: Created approved tools with proper guardrails. Employees stopped using shadow AI because the official tools worked better.

Common Mistakes to Avoid

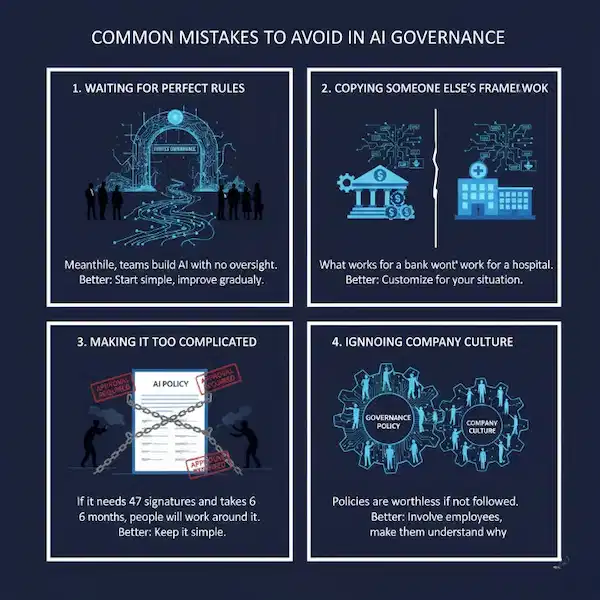

Waiting for Perfect Rules

Some companies spend years debating perfect governance. Meanwhile, teams build AI with no oversight. Better approach: Start with basic rules and improve them. Good enough now beats perfect never.

Copying Someone Else’s Framework

What works for a bank won’t work for a hospital. What works for a huge company won’t work for a startup. Better approach: Learn from others but customize for your situation.

Making It Too Complicated

If your governance needs 47 signatures and takes 6 months, people will work around it. Better approach: Keep it as simple as possible while still managing risks.

Ignoring Company Culture

You can create perfect policies on paper. If people don’t follow them, they’re worthless. Better approach: Involve employees in designing governance. Make sure they understand why rules exist.

Building the Right Team

Who You Need

• AI Ethics Officer: Thinks about fairness and doing right

• Data Lead: Controls quality, access, and privacy

• Risk Manager: Finds what could go wrong

• Legal Counsel: Knows laws and compliance

• Technical Expert: Understands how AI works

• Business Rep: Makes sure governance helps business goals

Skills They Need

The ideal person:

• Understands AI basics (doesn’t need to code)

• Knows relevant laws

• Can manage risks and think ahead

• Reasons through ethical problems

• Understands business needs

• Communicates clearly to everyone

Finding people with all these skills is hard. That’s why governance usually needs a team with different strengths.

Training Your Organization

Different people need different training:

• Executives: Why governance matters, key risks, their duties

• AI Practitioners: Responsible AI, bias testing, documentation

• Business Users: How to use AI properly, spot problems

• Everyone: Basic AI knowledge, company policies, ethics

Measuring If Your Governance Works

Important Numbers to Track

Process Metrics:

• AI with complete docs: Target >95%

• Training completion: Target 100%

• Review time: Track and reduce delays

• Policy compliance: Target >90%

Risk Metrics:

• Governance violations: Should go down

• Incidents needing escalation: Should decrease

• Bias audit pass rate: Target >90%

• Security issues caught before launch: Share should go up

Value Metrics:

• New AI uses enabled: Governance should enable, not just block

• Time from dev to launch: Balance speed and safety

• Customer satisfaction with AI: The ultimate goal

• Business value from AI: Real revenue and savings
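The numeric targets above are easy to check automatically. Here is a minimal sketch that compares measured values against them. The targets come from the lists above. The metric key names, the measured values, and the `check` function are made up for illustration.

```python
# Targets from the lists above: (comparison, threshold in percent).
TARGETS = {
    "docs_complete_pct": (">", 95),
    "training_completion_pct": (">=", 100),
    "policy_compliance_pct": (">", 90),
    "bias_audit_pass_pct": (">", 90),
}


def check(measured):
    """Label each tracked metric as ok, below target, or missing."""
    results = {}
    for metric, (op, target) in TARGETS.items():
        value = measured.get(metric)
        if value is None:
            results[metric] = "missing"  # not measured counts as a finding
        elif (op == ">" and value > target) or (op == ">=" and value >= target):
            results[metric] = "ok"
        else:
            results[metric] = "below target"
    return results


# Hypothetical readings for one month.
this_month = {
    "docs_complete_pct": 97,
    "training_completion_pct": 88,
    "policy_compliance_pct": 93,
}

for metric, status in check(this_month).items():
    print(f"{metric}: {status}")
# docs_complete_pct: ok
# training_completion_pct: below target
# policy_compliance_pct: ok
# bias_audit_pass_pct: missing
```

Flagging a metric nobody measured as "missing" instead of skipping it keeps blind spots visible in every review.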

Regular Check-Ups

• Monthly: Review metrics, discuss new projects, fix issues

• Quarterly: Audit compliance, update policies, check progress

• Annually: Full review, strategic planning, major updates

The Future of AI Governance

What’s Coming Next

AI keeps advancing. This creates new governance challenges:

• Generative AI: Copyright questions, misinformation risks

• Foundation Models: Big AI that’s hard to fully test

• Autonomous Agents: AI that acts without asking first

• Critical Systems: Medical diagnosis, infrastructure, finance

Each advancement needs new governance thinking. This is why AI transformation is a problem of governance that keeps evolving.

Better Tools for Governance

Technology will also improve governance:

• AI-powered compliance checking

• Automatic bias detection

• Blockchain for tamper-proof records

• Better privacy techniques

• Improved explainability tools

These tools will make governance more effective and less work.

Countries Working Together

Regulations worldwide are starting to align:

• Risk-based approaches becoming standard

• Countries coordinating on basic principles

• More enforcement and bigger penalties

• Focus on real outcomes, not just paperwork

FAQs

Why is governance more important than having the best AI?

Even the best AI fails without proper governance. It’s like having a powerful car with no steering wheel or brakes. You’ll crash. Governance addresses who’s responsible, whether the AI is fair, what risks exist, and whether you’re following the law. Technology alone can’t solve these issues.

How is AI governance different from regular IT rules?

Regular programs do exactly what you tell them, every time. AI learns from data and makes its own decisions, sometimes in surprising ways. Regular IT rules assume predictable behavior. AI governance must handle uncertainty, bias, explaining decisions, and watching systems that change over time.

What’s the first thing I should do?

Make a list of every AI tool your company uses. You can’t govern what you don’t know exists. Then find your highest-risk applications, those affecting jobs, money, health, or safety. Create basic policies for those first.

How much does AI governance cost?

Costs vary hugely based on company size and industry. You’ll need budget for staff, tools, training, and time. Think of governance costs as insurance. You pay some now to avoid much bigger losses later from lawsuits or failed projects.

Can small companies afford proper governance?

Absolutely! Small companies have advantages. Fewer systems to govern. Easier communication. Faster decisions. Start with lightweight processes focused on your biggest risks. Use free frameworks. Many governance principles cost almost nothing. They just require thinking carefully.

What if employees resist governance policies?

If people work around your rules, ask why. Usually it means your process is too slow or complicated. Involve employees in designing governance. Show how governance protects them. Provide approved tools that work better than unapproved ones. Make governance the easy path.

Conclusion

Let’s be clear: AI transformation is a problem of governance, not technology. The companies that win with AI won’t have the smartest algorithms. They’ll have the best governance. They’ll make AI work safely, fairly, and responsibly.

Good AI governance:

• Protects customers from unfair AI

• Keeps companies legal

• Builds public trust

• Lets you scale AI with confidence

• Prevents expensive failures

• Creates competitive advantage

The governance journey takes time. Usually years, not months. Most successful companies start small with their highest-risk AI. Then they expand as they learn.

Remember these key points:

• AI needs different governance than regular software

• Laws change fast, so stay informed

• Ethics matter beyond just following rules

• Many people need to be involved

• Risk management never stops

• Data quality determines AI quality

• Use existing frameworks instead of starting from zero

• Tools help, but people and processes matter more

• Culture determines if governance succeeds

• Keep improving as AI evolves

Start your AI governance journey today. List what AI you’re using. Find your biggest risks. Create basic practices. Perfect governance isn’t the goal. Good enough governance that protects you while enabling innovation is what matters.

The future belongs to organizations that master AI governance. They’ll build amazing AI while treating people fairly, protecting privacy, following laws, and building trust. That’s the real competitive advantage.

Your competitors are figuring this out right now. Will you lead this transformation? Or will you scramble to catch up after they’ve already won? Remember: AI transformation is a problem of governance. Start solving it today.